A Comparative Study of Agentic Frameworks and Multi-Agent Orchestration

Abstract

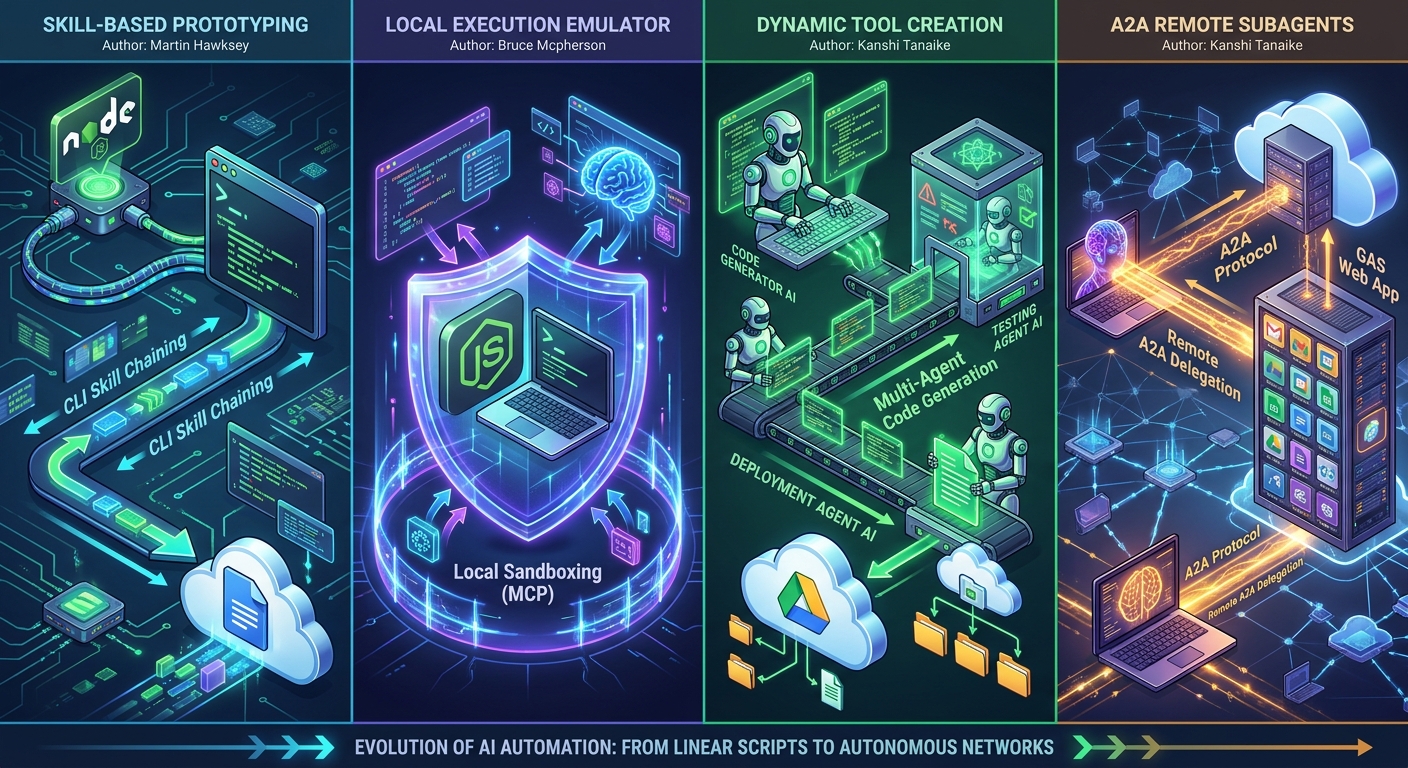

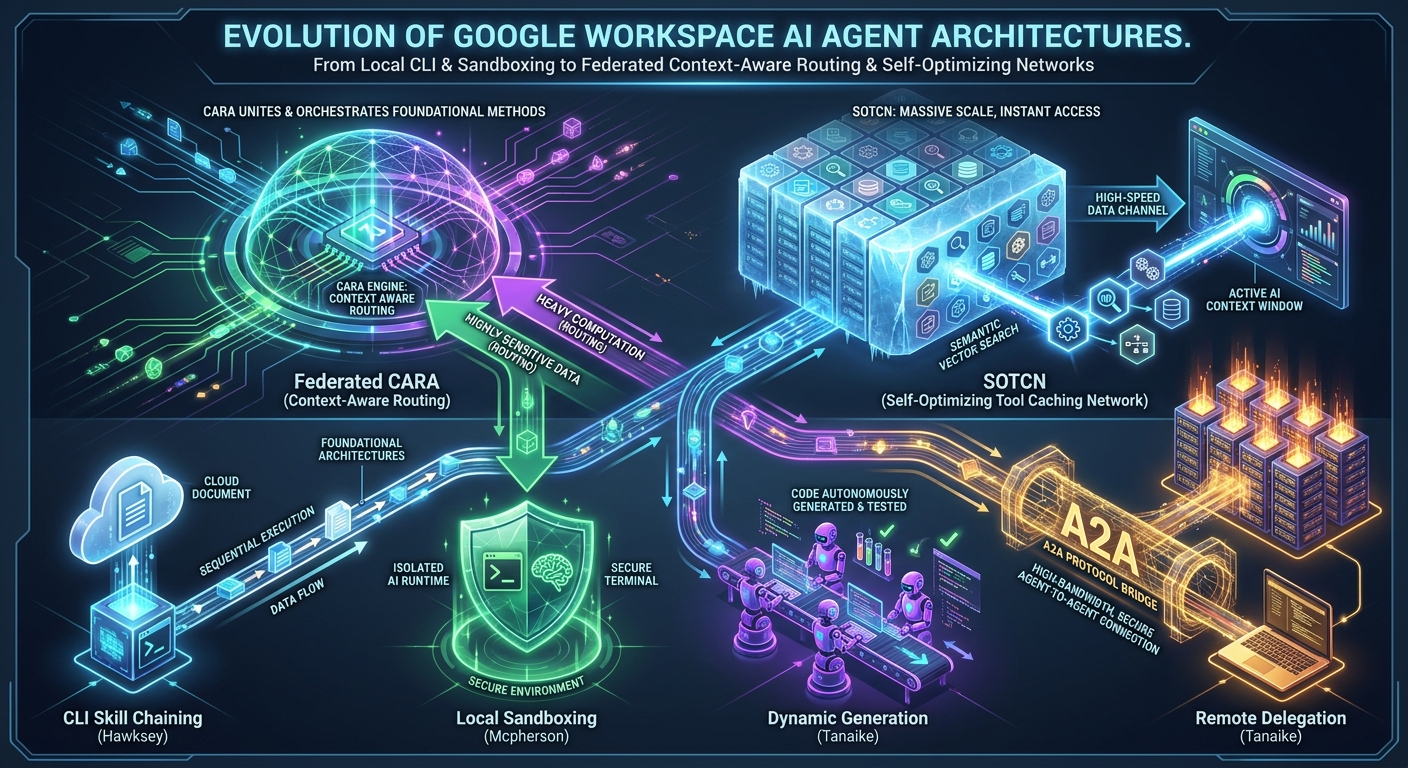

The transition from passive chatbots to autonomous execution environments was cemented at Google Cloud Next ’26 with the introduction of the Gemini Enterprise Agent Platform. This paper evaluates four AI agent methodologies for Google Workspace automation, developed by Martin Hawksey, Bruce Mcpherson, and Kanshi Tanaike. We deconstruct their structural approaches (CLI skill chaining, advanced emulation sandboxing, dynamic code generation, and A2A remote delegation) and show how these community-driven innovations anticipated native Next ’26 features such as the official Agent Skills repository and Model Context Protocol (MCP) support. Building upon these foundations, we propose two novel frameworks: the Federated Context-Aware Routing Architecture (Federated CARA) for zero-trust, multi-cloud task routing, and the Self-Optimizing Tool Caching Network (SOTCN), which uses dynamic semantic caching to eliminate Tool Space Interference. This comparative synthesis maps existing and proposed models against Google’s new enterprise standards, offering a scalable roadmap for secure, highly dynamic multi-agent orchestration.

1. Introduction

Historically, automating tasks within Google Workspace relied heavily on static, hardcoded macros defined via Google Apps Script (GAS) and rigidly scheduled cloud triggers. While highly effective for predictable workflows, this paradigm lacked the adaptability required for complex, context-dependent enterprise operations. The advent of Large Language Models (LLMs) catalyzed the transition toward the “Agentic Enterprise,” wherein AI entities act as autonomous orchestration layers capable of interacting dynamically with vast API ecosystems. At Google Cloud Next ’26, this shift was cemented through the introduction of Workspace Intelligence, a semantic unifying layer that allows agents to autonomously execute multi-step tasks across Gmail, Docs, Sheets, and Drive without manual context provisioning.

However, bridging LLM reasoning engines with Google Workspace APIs introduces profound architectural challenges, notably regarding execution latency, security perimeters, and Tool Space Interference (TSI). TSI, officially recognized by Google as “context bloat,” is a phenomenon in which an agent’s reasoning accuracy degrades and token costs rise sharply when its context window is overloaded with a large library of predefined functions.

This paper analyzes four prominent, recently published developer methodologies that sought to solve these challenges before they were addressed natively. By evaluating these frameworks against Next ’26 announcements such as the GKE Agent Sandbox, the native Agent Registry, and the Agent Gateway, we identify key strengths and limitations. Subsequently, we propose two hybrid frameworks, Federated CARA and SOTCN, tailored to leverage Google’s new native security and orchestration layers for secure, scalable enterprise deployment.

2. Architectural Analysis of Existing Methodologies

The automation of Google Workspace using AI agents is an emerging field characterized by highly divergent architectural philosophies. The following sections provide an exhaustive deconstruction of four primary paradigms.

2.1 Skill-Based Prototyping Using CLI Integrations (Martin Hawksey)

Ref: gws-web-to-doc

Martin Hawksey’s project (gws-web-to-doc) demonstrates how discrete, legacy command-line tools can be wrapped into modular AI skills. By combining local Node.js execution with the Google Workspace CLI (gws) and remote GAS deployment, Hawksey creates a linear execution pipeline. For instance, in his “Web-to-Doc” workflow, local scripts extract web content, the CLI natively converts the Markdown to a Google Document, and an API Executable GAS script (resizer.gs) handles precise image formatting.

- Primary Strength: Bridges legacy scripting and modern LLM orchestration, making isolated tasks readily accessible to AI agents.
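The linear pipeline described above can be pictured as a chain of skills, each consuming the state produced by the previous one. The following is a minimal sketch in Python rather than the project's actual Node.js and GAS code; the function names and return values are hypothetical stand-ins and do not call the gws CLI or resizer.gs.

```python
from typing import Callable, Dict, List

# A skill receives the shared pipeline state and returns an updated copy.
Skill = Callable[[Dict[str, str]], Dict[str, str]]


def extract_web_content(state: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical stand-in for the local extraction script.
    return {**state, "markdown": f"# Page from {state['url']}"}


def convert_markdown_to_doc(state: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical stand-in for the CLI Markdown-to-Document step.
    return {**state, "doc_id": "doc-" + str(len(state["markdown"]))}


def resize_images(state: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical stand-in for the remote image-formatting script.
    return {**state, "images_resized": "true"}


def run_chain(skills: List[Skill], state: Dict[str, str]) -> Dict[str, str]:
    # Run each skill in order; the output of one is the input of the next.
    for skill in skills:
        state = skill(state)
    return state


result = run_chain(
    [extract_web_content, convert_markdown_to_doc, resize_images],
    {"url": "https://example.com"},
)
```

Because every skill shares one signature, an orchestrating agent only has to choose the order of the steps, not how each step is implemented.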

2.2 Local Natural Language Execution via Emulation (Bruce Mcpherson)

Ref: gas-fakes agent: local natural language requests against workspace resources

Ref: gf_agent - Google Apps Script Local Automation Agent

Bruce Mcpherson addresses iteration latency and cloud security through his gas-fakes-agent framework. By utilizing the gas-fakes emulation layer, Mcpherson enables an LLM to dynamically generate GAS syntax and execute it within a Node.js environment that can be run locally or containerized in any cloud platform. Because it uses Google APIs under the hood, standard network and API latencies still apply, and the files manipulated remain cloud-based just like native GAS. The framework is highly versatile—it can be deployed across multiple cloud platforms (aligning with the modern emphasis on sovereign clouds) and can operate and mix multiple backends, such as Microsoft OneDrive and Office files. Crucially, the gf_agent approach is made possible by continuous learning from over 10,000 tests that gas-fakes uses to ensure its consistency with live Apps Script. This foundation allows the agent to self-augment through continuous cyclical feedback as new methods are introduced.

- Primary Strength: Advanced execution sandboxing and robust permission management. Its security paradigm emphasizes secure sandboxing rather than authentication per se. While it utilizes the same authentication mechanisms available to any OAuth2 protected app—such as Application Default Credentials (ADC), Domain Wide Delegation (DWD), and keyless workload identity federation—it uniquely takes care of the permission complications associated with them. Furthermore, its native compatibility with the Model Context Protocol (MCP) sets a standardized foundation for AI-to-tool interfacing.
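The emulation idea can be illustrated with a toy example: generated code runs against an in-memory stand-in for a Workspace service, and any failure is captured as feedback instead of reaching a live resource. This is a sketch under stated assumptions, not the gas-fakes implementation: gas-fakes executes Apps Script in Node.js and calls real Google APIs, whereas `FakeSheet` and `run_generated` below are hypothetical names that touch no network at all.

```python
from typing import List, Optional, Tuple


class FakeSheet:
    """Hypothetical in-memory stand-in for a spreadsheet service."""

    def __init__(self) -> None:
        self.rows: List[List[str]] = []

    def append_row(self, row: List[str]) -> None:
        self.rows.append(list(row))


def run_generated(code: str, sheet: FakeSheet) -> Tuple[bool, Optional[str]]:
    """Run generated code with only the fake exposed; return (ok, error)."""
    # NOTE: emptying __builtins__ is NOT a security boundary in Python.
    # Real isolation needs a container or a separate process.
    namespace = {"__builtins__": {}, "sheet": sheet}
    try:
        exec(code, namespace)
        return True, None
    except Exception as exc:
        # The error text is what would be fed back to the model.
        return False, f"{type(exc).__name__}: {exc}"


sheet = FakeSheet()
ok, err = run_generated('sheet.append_row(["id", "status"])', sheet)
bad_ok, bad_err = run_generated("sheet.delete_everything()", sheet)
```

The second call fails because the fake exposes no such method, and the captured error string is the kind of signal an agent could use to correct its next attempt.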

2.3 Dynamic Tool Creation to Combat Tool Space Interference (Kanshi Tanaike)

Ref: Empowering Autonomous AI Agents through Dynamic Tool Creation

Ref: autonomous-google-workspace-agent

To solve the cognitive bottleneck of Tool Space Interference (TSI) in enterprise LLMs, Kanshi Tanaike’s autonomous-google-workspace-agent introduces a fully autonomous, self-healing multi-agent architecture. When faced with an edge case lacking a predefined solution, a Senior Orchestrator coordinates five sub-agents (Environment Checker, Script Writer, Script Executor, Script Uploader, and Summary Agent) to write, sandbox-test (using gas-fakes), deploy (via clasp), and execute custom tools in real time.

- Primary Strength: Fundamentally eliminates TSI by relying on dynamic tool generation rather than a saturated context window. It serves as a secure “kill switch” against reasoning drift by isolating untrusted code generation within a sandbox before cloud deployment.
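The write, sandbox-test, deploy cycle can be sketched as a bounded retry loop in which sandbox errors are fed back to the script writer and deployment happens only after a clean test. This is an illustrative reduction, not the project's code: the callables, the `max_attempts` limit, and the stub behaviors are all assumptions introduced here.

```python
from typing import Callable, List, Optional, Tuple


def orchestrate(
    task: str,
    write_script: Callable[[str, Optional[str]], str],
    sandbox_test: Callable[[str], Optional[str]],
    deploy: Callable[[str], str],
    max_attempts: int = 3,
) -> Tuple[Optional[str], List[str]]:
    """Return (deployment id, log); the id is None if every attempt fails."""
    log: List[str] = []
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        script = write_script(task, feedback)
        feedback = sandbox_test(script)  # None means the test passed
        if feedback is None:
            log.append(f"attempt {attempt}: passed sandbox")
            return deploy(script), log
        log.append(f"attempt {attempt}: {feedback}")
    return None, log  # untested code never reaches deployment


# Hypothetical stubs: the writer repairs its script once it sees feedback.
def stub_writer(task: str, feedback: Optional[str]) -> str:
    return "v2-fixed" if feedback else "v1"


def stub_sandbox(script: str) -> Optional[str]:
    return None if "fixed" in script else "ReferenceError in sandbox"


def stub_deploy(script: str) -> str:
    return "deployed:" + script


deployment, log = orchestrate("merge cells", stub_writer, stub_sandbox, stub_deploy)
```

The early return on a passing test and the `None` result after exhausted attempts are what make the sandbox act as a gate in front of cloud deployment.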

2.4 Remote Subagent Integration via the A2A Protocol (Kanshi Tanaike)

Ref: Integrating Remote Subagents Built by Google Apps Script with Gemini CLI

Ref: gemini-cli-gas-a2a-subagents

In a subsequent approach (gemini-cli-gas-a2a-subagents), Tanaike solves TSI through remote delegation rather than dynamic generation. A primary LLM offloads a massive repository of over 160 established Workspace skills to a specialized Google Apps Script Web App using the Agent-to-Agent (A2A) protocol. Tanaike elegantly bypasses strict Google Cloud cross-domain (CORS) authentication hurdles by predefining “agent cards” locally within the Gemini CLI.

- Primary Strength: Preserves the primary agent’s reasoning stability without the compute overhead of writing new code, allowing frictionless access to massive legacy macro libraries.
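Delegation through locally defined agent cards can be sketched as a lookup followed by a request description. The field names below are loosely modeled on A2A agent cards but should be read as assumptions; the endpoint is a placeholder, and the returned dictionary is a simplified illustration rather than the A2A wire format.

```python
from typing import Any, Dict, List, Optional

# Hypothetical locally defined agent card; nothing is fetched over the network.
AGENT_CARDS: List[Dict[str, Any]] = [
    {
        "name": "workspace-subagent",
        "url": "https://example.invalid/exec",  # placeholder endpoint
        "skills": [
            {"id": "sheets.append_row", "description": "Append a row to a sheet"},
            {"id": "gmail.send", "description": "Send an email"},
        ],
    }
]


def find_agent(skill_id: str, cards: List[Dict[str, Any]]) -> Optional[Dict[str, Any]]:
    # Return the first card that advertises the requested skill.
    for card in cards:
        if any(skill["id"] == skill_id for skill in card["skills"]):
            return card
    return None


def build_delegation(
    skill_id: str, arguments: Dict[str, Any], cards: List[Dict[str, Any]]
) -> Dict[str, Any]:
    # Describe the delegated call; the remote agent holds the implementation.
    card = find_agent(skill_id, cards)
    if card is None:
        raise LookupError(f"no agent advertises skill {skill_id!r}")
    return {
        "agent": card["name"],
        "target": card["url"],
        "skill": skill_id,
        "arguments": arguments,
    }


request = build_delegation("gmail.send", {"to": "a@example.com"}, AGENT_CARDS)
```

The primary agent sees only skill identifiers and short descriptions, so the size of the remote skill library does not translate into tool definitions in its own context.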

3. Proposed Novel Methodologies for Advanced Workspace Automation

Based on the strengths and limitations of the analyzed frameworks, and aligning with the latest native paradigms introduced at Google Cloud Next ’26, we propose two novel architectural approaches intended for advanced enterprise use cases.

3.1 Federated Context-Aware Routing Architecture (Federated CARA)

- Concept: A zero-trust hybrid orchestration network that dynamically routes AI tasks based on a real-time assessment of data sensitivity, computational demand, and cross-platform interoperability requirements.

- Architecture: A central LLM routing agent acts as a security triage engine. If a natural language prompt involves sensitive organizational data or requires orchestration across mixed backends (e.g., interacting simultaneously with Google Workspace and Microsoft OneDrive), the orchestrator routes the execution to an advanced sandboxed emulation layer. Synthesizing Mcpherson’s gas-fakes approach, this layer utilizes keyless workload identity federation and Application Default Credentials (ADC) to securely manage complex permissions. It natively supports multi-cloud deployments, including sovereign clouds, ensuring that while external APIs are accessed under the hood, the execution environment itself remains strictly controlled and continuously validated through self-augmenting feedback loops. Conversely, if the task requires heavy bulk processing over established legacy macros, the execution is delegated to a remote A2A subagent (synthesizing Tanaike’s Web App protocol).

- Enterprise Value: Maximizes cross-platform versatility and infrastructure flexibility while enforcing strict compliance and data governance perimeters. By focusing on advanced execution sandboxing rather than relying solely on traditional authentication barriers, it securely bridges distinct enterprise ecosystems.
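The routing rule in the architecture above can be expressed as a small decision function. This sketch assumes the LLM triage step has already produced a structured task profile. The type names, the precedence of the checks, and the fail-closed default are assumptions introduced here, since the proposal does not specify them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Route(Enum):
    SANDBOXED_EMULATION = "sandboxed_emulation"
    REMOTE_A2A_SUBAGENT = "remote_a2a_subagent"


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical output of the triage step for one request."""

    sensitive: bool
    backends: FrozenSet[str]
    bulk_legacy_macro: bool


def route(task: TaskProfile) -> Route:
    # Sensitive data or mixed backends go to the sandboxed emulation layer.
    if task.sensitive or len(task.backends) > 1:
        return Route.SANDBOXED_EMULATION
    # Bulk work over established macros is delegated to the A2A subagent.
    if task.bulk_legacy_macro:
        return Route.REMOTE_A2A_SUBAGENT
    # Assumed default: fail closed toward the controlled environment.
    return Route.SANDBOXED_EMULATION


mixed = TaskProfile(False, frozenset({"google_workspace", "onedrive"}), False)
bulk = TaskProfile(False, frozenset({"google_workspace"}), True)
sensitive_bulk = TaskProfile(True, frozenset({"google_workspace"}), True)
decisions = [route(mixed), route(bulk), route(sensitive_bulk)]
```

Checking sensitivity before bulk processing means a task that is both sensitive and bulk-heavy stays in the sandbox, which is the conservative reading of a zero-trust design.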

3.2 Self-Optimizing Tool Caching Network (SOTCN)

- Concept: An advanced solution to Tool Space Interference (TSI) that bridges the gap between dynamic code creation, static remote delegation, and newly standardized enterprise skill registries.

- Architecture: Instead of writing code from scratch (which incurs API latency) or loading 160+ tools simultaneously (which inevitably degrades LLM reasoning), the SOTCN utilizes a massive “cold storage” repository of pre-validated scripts. Crucially, this architecture is designed to integrate seamlessly with Google’s newly announced official Agent Skills repository. By treating both officially published Agent Skills and custom-built Workspace scripts as modular assets, SOTCN acts as a dynamic curator. When a user issues a command, an ultra-fast semantic vector search identifies the top 3–5 most relevant skills. A local “Injection Agent” then dynamically injects only those specific functions into the primary LLM’s active Model Context Protocol (MCP) context window for the duration of that specific session.

- Enterprise Value: Eliminates TSI entirely by maintaining a pristine, minimal active context window. By leveraging both community-driven repositories and official Google Agent Skills, SOTCN avoids the latency and debugging failure rates of real-time code generation while effectively future-proofing enterprise deployments against evolving native ecosystem standards.
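The retrieval step can be sketched as a top-k similarity search over skill descriptions. To stay self-contained, the example replaces a learned embedding model with bag-of-words vectors and cosine similarity, which is a deliberate simplification; the registry contents and function names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    # Toy embedding: word counts. A real system would use a learned model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def select_tools(query: str, registry: Dict[str, str], k: int = 3) -> List[str]:
    # Rank every stored skill by similarity to the query and keep the top k.
    q = embed(query)
    ranked = sorted(
        registry, key=lambda name: cosine(q, embed(registry[name])), reverse=True
    )
    return ranked[:k]


# Hypothetical "cold storage" registry: skill name -> description.
REGISTRY = {
    "create_doc": "create a new google doc from markdown text",
    "send_email": "send an email message with gmail",
    "append_row": "append a row of values to a google sheet",
    "list_files": "list files in a drive folder",
    "resize_image": "resize an image inside a google doc",
}

selected = select_tools("append a row to the budget sheet", REGISTRY, k=2)
```

Only the names in `selected` would be handed to the injection step, so the active context holds k tool definitions regardless of how many skills sit in storage.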

4. Comparative Analysis

The table below synthesizes the structural and functional nuances of the four original methodologies alongside our two newly proposed frameworks.

5. Discussion and Conclusion

The evolution of Google Workspace automation reflects a broader industry shift toward “Agentic” design. Hawksey’s foundational work establishes how legacy scripts can be seamlessly bridged to LLMs, anticipating Google’s recent Next ’26 announcement of the official Agent Skills repository. Mcpherson’s integration of the gas-fakes emulation layer combined with the Model Context Protocol (MCP) redefines enterprise security and interoperability. Rather than simply isolating execution, Mcpherson demonstrates how advanced sandboxing, managed via mechanisms like keyless workload identity federation, enables multi-cloud versatility (supporting sovereign clouds) and cross-platform backend integration (e.g., Microsoft OneDrive). Furthermore, powered by continuous learning from over 10,000 tests, this approach proves that agents can reliably self-augment through cyclical feedback. Tanaike pushes the envelope of scalability; his dual approaches to Tool Space Interference (TSI) demonstrate that AI systems must either possess the autonomy to self-generate capabilities or the communicative protocols (A2A) to delegate them.

The proposed frameworks, Federated CARA and SOTCN, represent the logical next steps in this evolution, deeply aligning with the latest native Google Cloud capabilities. In particular, the SOTCN methodology provides a powerful extension to Google’s newly introduced Agent Skills paradigm by offering a semantic caching mechanism that programmatically eliminates context bloat while standardizing tool invocation. By optimizing for latency, context window preservation, multi-cloud versatility, and strict data compliance, these advanced methodologies outline a robust, future-proof roadmap for deploying enterprise-grade, autonomous AI coworkers that are as reliable as they are dynamic.

6. Summary

- Evolution of Automation: Workspace automation has officially shifted from rigid Google Apps Script macros to the “Agentic Era,” powered by the new Gemini Enterprise Agent Platform, which natively supports the building, scaling, and governance of autonomous agents.

- Skill Chaining (Hawksey): Utilizes the Workspace CLI to combine local Node.js execution with remote GAS deployment. This foundational approach anticipated Google’s newly announced Agent Skills repository, which formalizes skills as compact, agent-first documentation to mitigate context bloat.

- Local Sandboxing (Mcpherson): Employs the gas-fakes emulation layer and MCP to securely execute LLM-generated code locally or containerized in any cloud platform. This focus on secure isolation and standardized tooling is now mirrored at the enterprise level by Google’s native MCP support and the highly secure GKE Agent Sandbox utilizing gVisor.

- Dynamic Generation (Tanaike): Utilizes a sophisticated 5-agent orchestrator to write, sandbox-test, and cloud-deploy custom tools in real time, effectively solving Tool Space Interference (TSI). This mirrors the capabilities of Google’s newly announced Long-running agents, which autonomously execute multi-step workflows inside secure cloud sandboxes.

- Remote Delegation (Tanaike): Leverages the A2A protocol to offload massive libraries of over 160 predefined skills to remote GAS Web Apps. This community-built solution directly anticipated Google’s native A2A (Agent-to-Agent) Orchestration and Agent Registry, which now allow enterprise agents to natively discover and delegate tasks to one another.

- Proposed Method 1 (Federated CARA): A zero-trust architecture that routes AI tasks to either containerized emulation or remote A2A subagents based on the data’s security classification. This framework is perfectly positioned to integrate with the newly announced Agent Gateway and Agent Identity, natively enforcing IAM access control policies and guarding against data exfiltration.

- Proposed Method 2 (SOTCN): A semantic caching network that stores predefined tools in “cold storage” and dynamically injects only the most relevant functions into the agent’s active context window. This architecture enhances Google’s new Agent Skills paradigm by programmatically eliminating context bloat without the latency of generative code writing.