Analyzing Trends of Google Apps Script from Questions on Stack Overflow using Gemini 1.5 API

Abstract

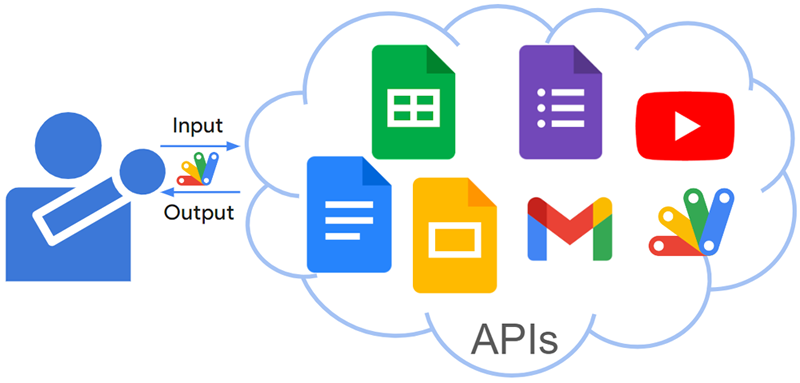

Gemini, a new large language model (LLM), is now available through an API, allowing developers to analyze large amounts of data. This report explores trends in Google Apps Script by using the Gemini 1.5 API to analyze questions on Stack Overflow.

Introduction

The release of the Gemini LLM as an API on Vertex AI and Google AI Studio has opened up a world of possibilities. (Ref) The Gemini API significantly expands what can be done from various scripting languages, paving the way for diverse applications. In addition, Gemini 1.5 has recently been released in AI Studio. (Ref) We can expect the Gemini 1.5 API to follow soon.